Job skills assessment: How to evaluate workforce capability objectively

Job skills assessment covers two different use cases: screening candidates before hiring, and measuring the capability of your existing workforce. This guide focuses on the second. Learn the five assessment methods, how to run a structured assessment in five steps, and what evidence regulated industries need to stay audit-ready.

These two use cases are very different, and knowing which one applies determines the method, the tool, and the outcome you should be working toward.

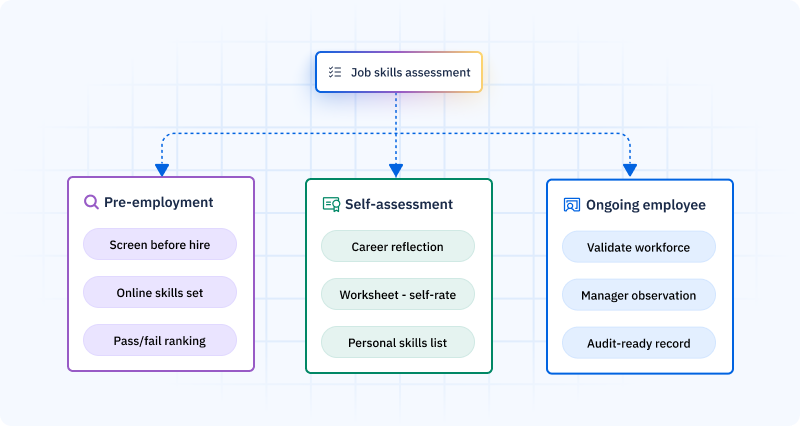

First, pre-employment skills assessment tests candidates before hiring, checking for the presence of specific skills needed for a role. By contrast, ongoing employee skills assessment measures the ability of current employees against role needs, confirming proficiency and keeping a live record of what the workforce can do.

Most online content about job skills assessment covers the first type. However, this article covers the second, because that is where the day-to-day and strategic value lies for HR teams, L&D pros, and operations managers running an existing workforce.

What is a skills assessment?

A skills assessment is a structured process for judging someone’s abilities, measuring what they can do, at what level of proficiency, against a set standard.

Three types of job skills assessment

Not all skills assessments serve the same purpose. The method, format, and output you need depend entirely on which of these three types you are running.

| Type | Purpose | Typical format | Output |
|---|---|---|---|
| Pre-employment | Screen candidates before hiring | Online skills test, cognitive check, technical challenge | Pass/fail or comparative ranking for hiring decision |
| Self-assessment | Personal career exploration and reflection | Online tool, worksheet, self-rating | Personal skills list for career planning |
| Ongoing employee assessment | Validate workforce ability against role needs | Manager observation, certificate review, technical test | Validated competency record for workforce planning |

This article focuses on ongoing employee assessment: in other words, the structured, repeatable process that produces validated competency data for daily decisions.

This is also where skill assessment software adds the most measurable value, replacing spreadsheets and paper records with a live, auditable system.

Types of employee skills assessment methods

Different skills need different assessment methods. In practice, matching the method to the type of skill being checked is the main quality driver for assessment data.

Manager observation assessment

Best for: Technical day-to-day skills, safety-critical tasks, equipment operation, practical procedures.

The assessor, typically the direct line manager or a qualified supervisor, watches the employee perform the skill in real working conditions and rates proficiency against set criteria. This method works best when the proficiency criteria are clearly set out in advance. In short, watching real performance in real conditions is the most direct way to measure on-the-job skill. It cannot be gamed, and it does not rely on the employee’s view of themselves.

Certification-based assessment

Best for: Safety certifications, professional qualifications, regulatory licenses.

The employee holds an externally issued certificate that confirms a set skill level, given by a qualified awarding body after a formal assessment process. The certificate itself is the evidence, so the firm's job is to track when it expires, not to repeat the assessment.
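Tracking expiry amounts to a date filter over the certificate records. The following is a minimal sketch, not a reference implementation: the `Certificate` fields, the sample names, and the 60-day warning window are all assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Certificate:
    # Hypothetical record shape; field names are illustrative only.
    employee: str
    name: str
    expires_on: date


def expiring_within(certs, today, window_days=60):
    """Return certificates already expired or expiring within the window, soonest first."""
    cutoff = today + timedelta(days=window_days)
    due = [c for c in certs if c.expires_on <= cutoff]
    return sorted(due, key=lambda c: c.expires_on)


certs = [
    Certificate("A. Jansen", "Forklift operator", date(2025, 3, 1)),
    Certificate("B. Smith", "First aid", date(2026, 1, 15)),
    Certificate("C. Lee", "Forklift operator", date(2025, 1, 10)),
]

for cert in expiring_within(certs, today=date(2025, 1, 20)):
    print(cert.employee, cert.name, cert.expires_on.isoformat())
```

Passing `today` explicitly rather than calling `date.today()` inside the function keeps the check repeatable, which matters if the result is ever used as audit evidence.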

Technical skills test

Best for: Specific, testable technical skills such as data entry, software use, coding, and language testing.

This is a set test, online or hands-on, that measures whether an employee can do a given technical task to a required standard. However, it is less suited to complex day-to-day skills where on-the-job performance matters more than test performance.

360-degree assessment

Best for: Leadership skills, communication, teamwork, and behavioral skills visible to colleagues.

Feedback is gathered from multiple sources: direct manager, peers, direct reports, and sometimes customers. It is most useful for skills that are more visible to colleagues across teams than to the line manager. It is less reliable for technical or safety-critical skills where set criteria apply.

Simulation and scenario-based assessment

Best for: High-risk or high-stakes skills where putting an unqualified employee on the job creates risk you cannot accept.

The employee runs a realistic simulation of a task or scenario, judged by an observer against set criteria. This method is used in emergency response, aircraft maintenance, drug production, and complex clinical procedures.

Self-assessment is not on this list for a reason. It has a built-in flaw: research consistently shows that the people who most need to grow in a skill are the ones most likely to rate themselves as skilled. Dunning and Kruger described this effect formally in 1999. Self-assessment belongs in the conversation stage, not the evidence stage.

Hard skills assessment vs. soft skills assessment

Hard skills assessment

Hard skills are specific, teachable, and measurable. They cover the technical knowledge and hands-on abilities tied to a job, such as running a machine, coding in Python, reading engineering drawings, or carrying out a chemical handling procedure.

Hard skills assessment methods include manager observation (for day-to-day skills), certificate review (for regulated skills), and technical tests (for specific measurable skills). The output is a clear proficiency level, typically 1 to 4, backed by observable evidence. For regulated roles, this evidence doubles as a compliance document.

Soft skills assessment

Soft skills are behavioral and people-based: communication, leadership, problem-solving, and adapting to change. Although they are real skills that affect performance, they are harder to measure objectively.

Soft skills assessment methods include 360-degree feedback and structured manager assessment against set behavioral indicators. However, without behavioral anchors (specific observable signs of what “Level 3 communication” looks like for this role), soft skills ratings are opinions, not assessments.

| | Hard skills assessment | Soft skills assessment |
|---|---|---|
| Nature | Specific, teachable, measurable | Behavioral, people-based, context-driven |
| Methods | Manager observation, cert review, technical test | 360-degree, manager assessment against behavioral indicators |
| Output | Proficiency rating + observable evidence | Rating against set behavioral anchors |
| Used for | Drives deployment and compliance decisions | Informs development planning and succession |

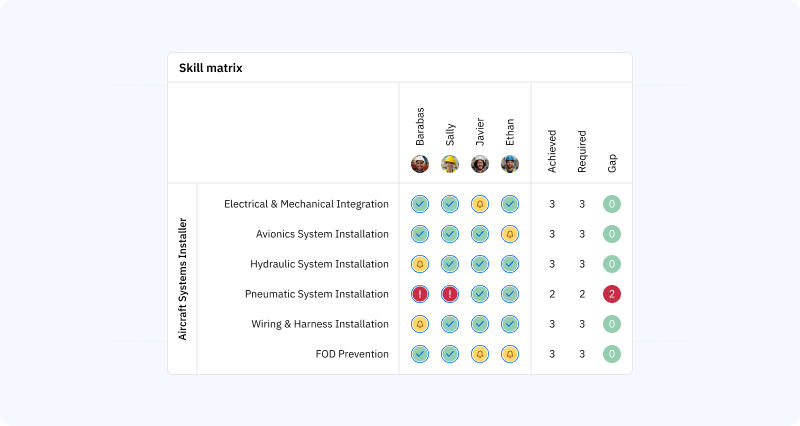

Both types feed into your skills matrix, giving you a complete view of workforce ability across roles, teams, and sites.

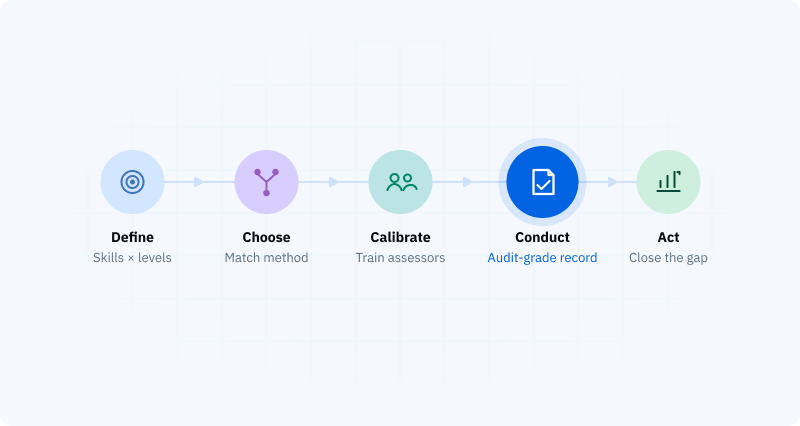

How to run a job skills assessment in 5 steps

Step 1: Define what you are assessing against

To start, set the competency management framework for the role: what skills does this role require, at what proficiency level, and for what day-to-day purpose? Without a set framework, the assessment produces a rating of skill against an undefined standard, which is not useful for any decision.

Step 2: Choose the right assessment method

Match the method to the type of skill:

- Technical day-to-day skills: manager observation.
- Certified skills: certificate review.
- Specific measurable skills: technical test.
- Leadership and behavioral skills: 360-degree or structured manager assessment.

Above all, avoid using the cheapest or most handy method for all skills. For example, a forklift operator judged by self-report rather than observed practical assessment is a liability, not a qualification.
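The matching rule in this step can be expressed as a simple lookup. This is a sketch under assumptions: the category keys are illustrative labels invented here, not a standard taxonomy, and a real framework would likely map individual skills rather than broad types.

```python
# Skill categories and recommended methods as listed in Step 2.
# The category keys are illustrative labels, not a standard taxonomy.
METHOD_BY_SKILL_TYPE = {
    "technical_day_to_day": "manager observation",
    "certified": "certificate review",
    "specific_measurable": "technical test",
    "leadership_behavioral": "360-degree or structured manager assessment",
}


def choose_method(skill_type: str) -> str:
    """Look up the assessment method for a skill type; fail loudly if unmapped."""
    try:
        return METHOD_BY_SKILL_TYPE[skill_type]
    except KeyError:
        raise ValueError(
            f"No assessment method defined for skill type: {skill_type!r}"
        ) from None


print(choose_method("certified"))  # certificate review
```

Raising an error for an unmapped skill type, instead of falling back to a default method, reflects the point above: no skill should quietly end up assessed by whatever method is most convenient.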

Step 3: Train assessors

Assessment reliability depends on assessor calibration: every manager must apply the same criteria when they rate "Level 3." Without calibration, different assessors produce ratings you cannot compare.

Assessor training should cover: how to use the proficiency scale, what observable evidence is needed at each level, how to run a structured observation, and how to record findings.
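One way to check calibration is to have several assessors rate the same observed performance and compare the spread of their ratings. The sketch below assumes such a calibration session exists; the tuple layout, the sample data, and the tolerance of one level are illustrative choices, not a prescribed standard.

```python
from collections import defaultdict


def calibration_spread(ratings):
    """Group ratings by skill and return the max-minus-min spread per skill.

    `ratings` is an iterable of (assessor, skill, level) tuples in which every
    assessor rated the same observed performance.
    """
    levels = defaultdict(list)
    for _assessor, skill, level in ratings:
        levels[skill].append(level)
    return {skill: max(vals) - min(vals) for skill, vals in levels.items()}


def needs_recalibration(ratings, max_spread=1):
    """Return skills, sorted by name, whose rating spread exceeds the tolerance."""
    spread = calibration_spread(ratings)
    return sorted(skill for skill, s in spread.items() if s > max_spread)


# Hypothetical calibration session: three managers rate the same two performances.
session = [
    ("manager_1", "pallet stacking", 3),
    ("manager_2", "pallet stacking", 3),
    ("manager_3", "pallet stacking", 2),
    ("manager_1", "lockout/tagout", 4),
    ("manager_2", "lockout/tagout", 2),
    ("manager_3", "lockout/tagout", 3),
]

print(calibration_spread(session))
print(needs_recalibration(session))
```

A skill flagged here suggests the proficiency criteria for that skill need sharper wording or the assessors need another training round before their ratings are compared.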

Step 4: Conduct and document the assessment

Run the assessment using the set method and criteria. Then record: the employee assessed, the assessor, the skill assessed, the proficiency rating, the evidence backing the rating, the date, and when the next assessment is due.
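The fields listed above map naturally onto a single record type. The following is a minimal sketch: the class name, field names, and validation rules are assumptions for illustration, and it assumes the 1 to 4 proficiency scale mentioned earlier.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class AssessmentRecord:
    # One field per item listed in Step 4; names are illustrative.
    employee: str
    assessor: str
    skill: str
    proficiency: int   # assumes a 1 to 4 scale
    evidence: str      # e.g. a reference to an observation form or test result
    assessed_on: date
    next_due: date

    def __post_init__(self):
        # Reject records that would not hold up as evidence.
        if not 1 <= self.proficiency <= 4:
            raise ValueError("proficiency must be between 1 and 4")
        if not self.evidence.strip():
            raise ValueError("a rating needs supporting evidence")
        if self.next_due <= self.assessed_on:
            raise ValueError("next_due must be after assessed_on")


record = AssessmentRecord(
    employee="A. Jansen",
    assessor="B. Smith",
    skill="forklift operation",
    proficiency=3,
    evidence="observation-form-0042.pdf",
    assessed_on=date(2025, 1, 20),
    next_due=date(2026, 1, 20),
)
print(record)
```

The record is frozen so that a stored assessment cannot be edited in place; a correction would be a new record, which keeps the history intact.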

For regulated industries, this paperwork is a compliance document. In short, it is what ISO auditors, OSHA inspectors, and other regulators will ask for.

Step 5: Update the skills record and act on the data

Assessment data sitting in a spreadsheet serves no purpose. Instead, feed results into the skills inventory, compare against role needs, surface the gaps, and connect to development actions. Ultimately, this is the step that turns assessment from an admin task into a workforce management tool.
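Comparing assessed levels against role needs is a small calculation once both are recorded. This sketch assumes both are simple mappings from skill name to proficiency level, and that a skill never assessed counts as level 0; the skill names and levels are made up for the example.

```python
def skill_gaps(required, assessed):
    """Compare required levels for a role with an employee's assessed levels.

    Both arguments map skill name -> proficiency level. A skill that was never
    assessed counts as level 0. Returns {skill: shortfall} for gaps only.
    """
    gaps = {}
    for skill, required_level in required.items():
        shortfall = required_level - assessed.get(skill, 0)
        if shortfall > 0:
            gaps[skill] = shortfall
    return gaps


role_requirements = {
    "forklift operation": 3,
    "hazard communication": 2,
    "pallet stacking": 3,
}
employee_levels = {"forklift operation": 3, "hazard communication": 1}

print(skill_gaps(role_requirements, employee_levels))
# {'hazard communication': 1, 'pallet stacking': 3}
```

Each entry in the result is a candidate development action: the skill to work on and how many levels the employee is short.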

What makes skills assessment data reliable

Three common reliability failures produce data that looks complete but cannot be trusted:

- Self-assessment as the main method. Self-rated skill levels generally overstate actual proficiency. In day-to-day and regulated settings, using self-report as the basis for deployment decisions is a compliance risk.

- No set proficiency criteria. A scale with numbers but no behavioral anchors produces ratings that mean different things to different assessors. Therefore, define what each level means in observable, specific terms before any assessment begins.

- No evidence requirement. A rating without evidence is just an opinion. As such, the firm should be able to produce a real record, whether an observation form, a test result, or a certificate, to back every proficiency rating in the skills inventory.

Skills assessment in regulated industries

For manufacturing, healthcare, aerospace, drug production, logistics, and construction, employee skills assessment is a regulatory requirement.

| Standard | Skills assessment requirement |
|---|---|
| ISO 9001 Clause 7.2 | Documented evidence of skill for all roles affecting quality |
| ISO 45001 Clause 7.2 | Documented skill evidence for safety-critical roles |
| FDA 21 CFR Part 11 | Electronic records with immutable audit trail, e-signatures, and locked history |
| IATF 16949 | Layered skill needs at process level, not just role level |
| OSHA 29 CFR 1910 | Training records for forklift, LOTO, respiratory protection, hazard communication; evidence must come before the task |

In all of these contexts, “we ran a training course” is not the evidence. Instead, “this employee was judged against set criteria, achieved Level X, signed off by [name], on [date], with this evidence attached” is the evidence.

A training matrix helps regulated firms map training needs to roles and track completion status alongside assessment records, keeping both views in sync in a single system.

How AG5 manages employee skills assessment

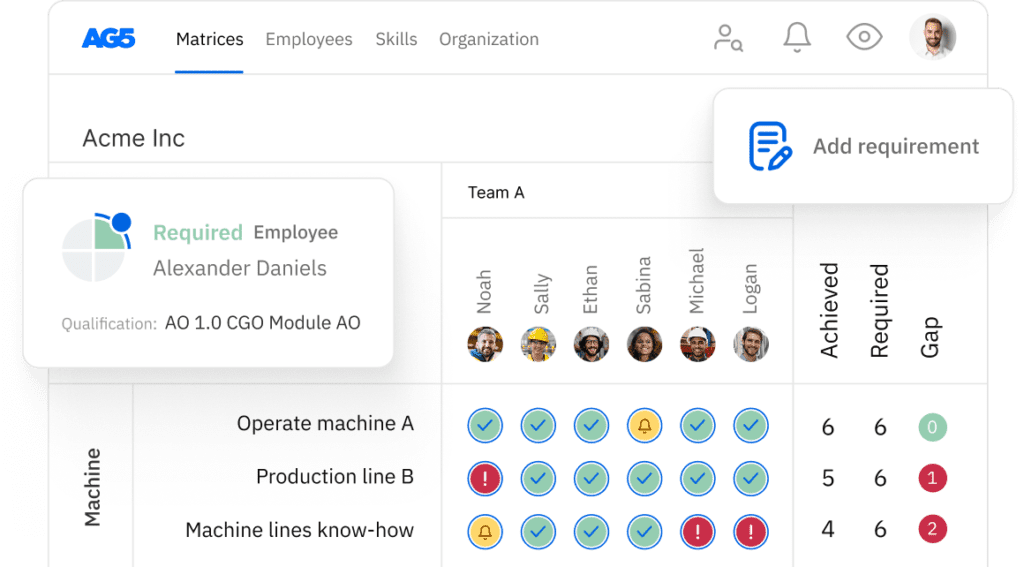

AG5 is built specifically for ongoing employee skills assessment in day-to-day and regulated settings. As a result, it replaces spreadsheets, paper observation forms, and disconnected training records with a single system that produces audit-ready competency data.

- Built-in skill frameworks. AG5’s Skills Library includes ready-made frameworks for manufacturing, logistics, healthcare, and aerospace, aligned to ISO 9001, IATF 16949, and AS9100. The assessment criteria are built in, not kept in separate documents.

- Manager assessment workflow. Assessors run structured observations using set proficiency criteria on the AG5 mobile app. Then, ratings, evidence, and sign-off are captured digitally. As a result, records update right away.

- Multi-rater assessment support. For skills needing more than one assessor view, AG5 records each rating separately with a combined view for the manager.

- Immutable audit trail. Every assessment record is logged with full attribution and time stamp. As such, the full qualification history is on hand for regulatory checks.

- Assessment scheduling. For skills with set re-assessment cycles, AG5 schedules the next assessment on its own and alerts when re-assessment is due.

- Gap analytics. Assessment data feeds straight into a real-time view of where the workforce sits below required skill levels, by role, team, site, and skill type.

Assessment in AG5 is a continuous workflow, not a one-off event. Furthermore, results feed straight into role-based gap views, giving managers and HR teams a live picture of workforce readiness without manual reporting.

Move from opinions to evidence in your skills assessment. AG5 gives day-to-day teams validated, manager-assessed competency records that hold up under audit and feed straight into workforce decisions. Book a free demo at ag5.com

FAQs

- What is a job skills assessment?
- What is the difference between a pre-employment assessment and an employee skills assessment?
- What are the different types of skills assessment?
- What is the difference between hard skills and soft skills assessment?
- Why is self-assessment unreliable for skills assessment?
- What is a technical skills assessment?
- What does ISO 9001 require in terms of employee skills assessment?
- How does AG5 support employee skills assessment?

Written by: Rick van Echtelt

Copy edited by: Adam Kohut